How AI Evaluates Trust in Nonprofits (EEAT in the AI Era)

Artificial intelligence doesn’t just rank nonprofits.

It evaluates whether they deserve to be recommended.

That distinction matters.

In traditional search, your organization competed for rankings. In AI-driven search, you compete for inclusion in summaries, recommendations, and citations.

The bar is higher.

For nonprofits — where donations, legitimacy, and public trust are central — this shift changes everything.

Why Trust Is Different in the AI Era

AI-generated answers don’t simply list websites.

They synthesize information.

When someone searches:

- “Is this charity legitimate?”

- “Best nonprofits supporting food insecurity”

- “Where should I donate for disaster relief?”

AI systems evaluate which organizations demonstrate sufficient trust signals to be surfaced confidently.

This isn’t about keyword density.

It’s about how clearly your credibility is documented.

If your nonprofit lacks structured, transparent authority signals, AI may exclude you entirely — even if you previously ranked well.

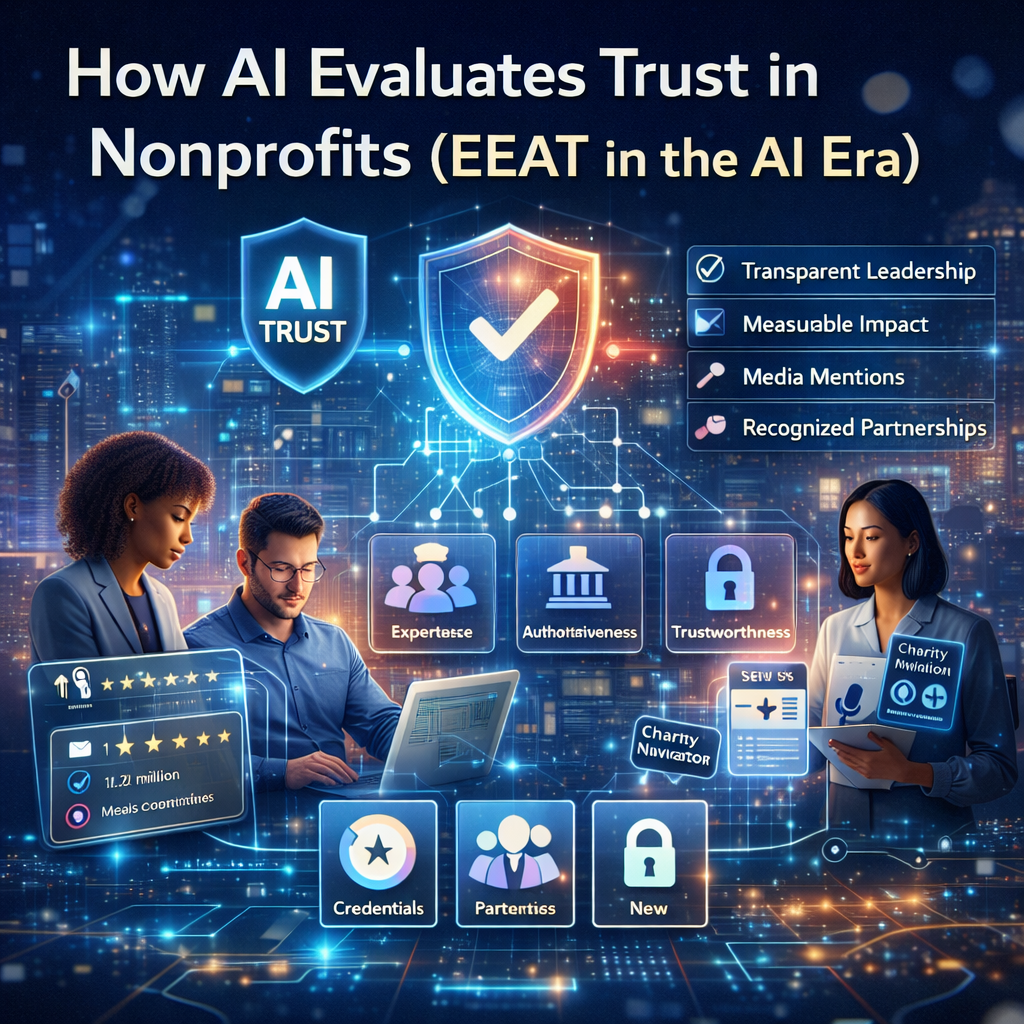

Understanding EEAT in Context

Google’s EEAT framework (Experience, Expertise, Authoritativeness, Trustworthiness) builds on E-A-T, a long-standing part of its search quality rater guidelines. Experience was added in December 2022.

But AI systems operationalize it differently.

Instead of evaluating a single page in isolation, AI systems:

- Cross-reference your organization across the web

- Compare your messaging for consistency

- Evaluate transparency signals

- Analyze authorship clarity

- Look for documented impact evidence

Nonprofits operate in a higher-trust category by default.

Which means ambiguity is costly.

The 6 Trust Signals AI Looks for in Nonprofits

1️⃣ Clear Organizational Identity

AI needs to clearly understand:

- Who you are

- What you do

- Where you operate

- Who you serve

Vague mission statements dilute entity clarity.

Your homepage should define your nonprofit in two precise sentences.

(For structured clarity improvements, see our [AI Optimization Services Page Placeholder].)

2️⃣ Transparent Leadership & Governance

Anonymous content erodes authority.

Strong trust signals include:

- Executive director bio

- Board member listings

- Credential highlights

- Clear contact information

Transparency reduces ambiguity.

Ambiguity reduces recommendation probability.

3️⃣ Documented, Quantified Impact

Statements like “We change lives” give AI nothing concrete to extract.

Specific statements are extractable:

“We distributed 1.2 million meals to 4,300 families across three counties in 2025.”

AI favors structured, measurable outcomes.

4️⃣ Third-Party Validation

AI models cross-reference data.

Trust is reinforced when your nonprofit demonstrates:

- Recognized partnerships

- Media mentions

- Charity Navigator ratings

- Government or foundation grants

- Accreditation listings

Consistency across platforms strengthens authority.

5️⃣ Content Expertise

If you publish blog content:

- Are authors identified?

- Do authors demonstrate subject expertise?

- Are claims supported?

- Is medical/legal/financial content appropriately cautious?

Nonprofits in health, legal, or financial assistance categories are held to even stricter scrutiny.

6️⃣ Consistency Across Channels

AI systems compare:

- Your website

- Your Google Business Profile

- Your Google Ad Grants campaigns

- External mentions

- Social profiles

If your mission description differs from channel to channel, the trust signal weakens.

Consistency compounds credibility.

Where Nonprofits Accidentally Erode Trust

Even well-meaning organizations undermine their authority through:

- Outdated leadership pages

- Broken donation forms

- Missing privacy policies

- Anonymous blog posts

- No structured data

- Inconsistent service area messaging

- Unclear grant landing pages

AI interprets friction as uncertainty.

And uncertainty reduces recommendation likelihood.

How to Strengthen AI-Readable Trust Signals

Here’s where optimization becomes strategic.

Add Author Schema to Articles

Reinforce real expertise.
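
As a rough illustration, author markup can be generated as schema.org JSON-LD and embedded in the article page. The sketch below is a minimal example, not a definitive implementation: the function name, the author, the organization, and the date are all hypothetical placeholders, and the property set is only a starting point.

```python
import json


def article_jsonld(headline, author_name, author_title, org_name, date_published):
    """Build schema.org Article markup that names an identifiable author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
        },
        "publisher": {"@type": "Organization", "name": org_name},
    }


# Hypothetical values, for illustration only.
article_markup = article_jsonld(
    headline="How Our Mobile Pantry Reaches Rural Families",
    author_name="Jane Doe",
    author_title="Director of Programs",
    org_name="Example Food Bank",
    date_published="2025-06-01",
)

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
article_script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(article_markup, indent=2)
    + "\n</script>"
)
print(article_script_tag)
```

The markup should describe the same person shown in the visible byline, so that the structured data and the page say the same thing.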

Clarify Impact Pages

Structure outcomes with measurable data.
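
One simple way to audit impact copy is to check whether each statement contains a figure and a year. The sketch below is a rough heuristic under that assumption, using the two example statements from earlier in this article. The function name and the regular expressions are illustrative, not a standard.

```python
import re

# Heuristic patterns: any digit-led figure, and a four-digit year (1900-2099).
NUMBER = re.compile(r"\d[\d,.]*")
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def audit_impact_statement(text):
    """Report whether a statement contains a figure and a reporting year."""
    return {
        "has_numbers": bool(NUMBER.search(text)),
        "has_year": bool(YEAR.search(text)),
    }


vague = "We change lives"
specific = (
    "We distributed 1.2 million meals to 4,300 families "
    "across three counties in 2025."
)

for statement in (vague, specific):
    print(statement, audit_impact_statement(statement))
```

A statement that fails both checks is a candidate for rewriting with a count, a population, a place, and a time period.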

Standardize Mission Language

Use consistent phrasing across:

- Homepage

- About page

- Google Business Profile

- Grant campaigns
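
A quick consistency check can compare each channel's mission text against the homepage wording. The sketch below uses Python's standard `difflib` for a rough character-level similarity. The 0.8 threshold, the function names, and the sample text are assumptions for illustration, not how any AI system actually measures consistency.

```python
from difflib import SequenceMatcher


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def mission_drift(reference, variants, threshold=0.8):
    """Return channels whose wording similarity to the reference is below threshold."""
    ref = normalize(reference)
    flagged = {}
    for channel, text in variants.items():
        ratio = SequenceMatcher(None, ref, normalize(text)).ratio()
        if ratio < threshold:
            flagged[channel] = round(ratio, 2)
    return flagged


# Hypothetical mission text, for illustration only.
homepage = "We provide free meals to families facing food insecurity in three counties."
channels = {
    "About page": "We provide free meals to families facing food insecurity in three counties.",
    "Google Business Profile": "we provide free meals to families  facing food insecurity in three counties.",
    "Grant campaigns": "Helping people.",
}

drifted = mission_drift(homepage, channels)
print(drifted)  # only the grant campaign text falls below the threshold
```

Flagged channels are not necessarily wrong, but they are the first places to review when standardizing language.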

Implement Structured Data

At minimum:

- Organization schema

- Article schema

- FAQ schema (when appropriate)
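
For a sense of what Organization schema looks like in practice, the sketch below builds a minimal schema.org JSON-LD object. The organization name, URLs, and service areas are hypothetical placeholders, and the property set is an assumed minimum rather than a complete or required list.

```python
import json


def organization_jsonld(name, url, description, area_served, profiles):
    """Build minimal schema.org Organization markup for a nonprofit."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,  # reuse the standardized mission wording
        "areaServed": area_served,
        "sameAs": profiles,  # official profiles on other platforms
    }


# Hypothetical values, for illustration only.
org_markup = organization_jsonld(
    name="Example Food Bank",
    url="https://www.example.org",
    description=(
        "Example Food Bank distributes free meals to families "
        "facing food insecurity in three counties."
    ),
    area_served=["County A", "County B", "County C"],
    profiles=["https://www.example.org/placeholder-profile"],
)

print(json.dumps(org_markup, indent=2))
```

The printed JSON goes inside a `<script type="application/ld+json">` tag on the homepage. Keeping the `description` identical to the mission sentence used elsewhere supports the consistency point above.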

Align AI Optimization (AIO) with Google Ad Grants

Trust influences:

- Quality Score

- Conversion rate

- Account stability

- Policy compliance

AIO isn’t separate from grants.

It supports them.

(See our [Google Grant Management Page Placeholder] to learn how grant strategy and AI optimization work together.)

Why This Matters for 2026 and Beyond

AI-driven summaries are shaping:

- Donation research

- Volunteer decisions

- Grant applications

- Organizational comparisons

- Legitimacy validation

Nonprofits that proactively strengthen trust signals are better positioned to:

- Appear in AI summaries

- Be cited in conversational search

- Earn stronger donor confidence

- Improve campaign performance

Those that delay risk becoming invisible.

And invisibility compounds over time.

Final Thought

AI visibility is not about gaming algorithms.

It’s about proving credibility at scale.

Nonprofits already have what AI values most:

- Purpose

- Expertise

- Community impact

The opportunity is not reinvention.

It’s structured trust.

Frequently Asked Questions

What is EEAT and why does it matter for nonprofits?

EEAT stands for Experience, Expertise, Authoritativeness, and Trustworthiness. It comes from Google’s search quality rater guidelines, and AI systems look for comparable signals when deciding whether a nonprofit should be recommended in search results.

Does AI prioritize larger nonprofits?

Not necessarily. AI prioritizes clarity, consistency, and documented credibility — not organizational size.

How does trust impact Google Ad Grant performance?

Stronger trust signals often improve Quality Score, conversion rates, and compliance stability within Google Ad Grant accounts.